Editorial-design sweep, structured score submissions, lineage build-out.

Largest single-day visual rebrand to date. ~50 pages migrated from the legacy dark-Tailwind layout to the editorial .cs design system: /tasks/* leaderboards (document-ocr, code-generation, text-to-image, text-to-speech), /llm/* benchmark hubs (coding-benchmarks, humaneval-mbpp, gsm8k-math, reasoning-benchmarks), /agentic/* head-to-heads (Aider/Devin/Codex/Cursor vs Claude Code), /speech/* TTS comparisons (ElevenLabs/Cartesia/OpenAI/Google + audiobook guide), and the bulk of /ocr/* (vendor profiles, head-to-heads, best-for guides, brand pages with sub-routes, meta and decision pages, deep-dive benchmarks). Each page wraps in `

Registry as product — /api/sota, coding lineage, contamination-tax methodology.

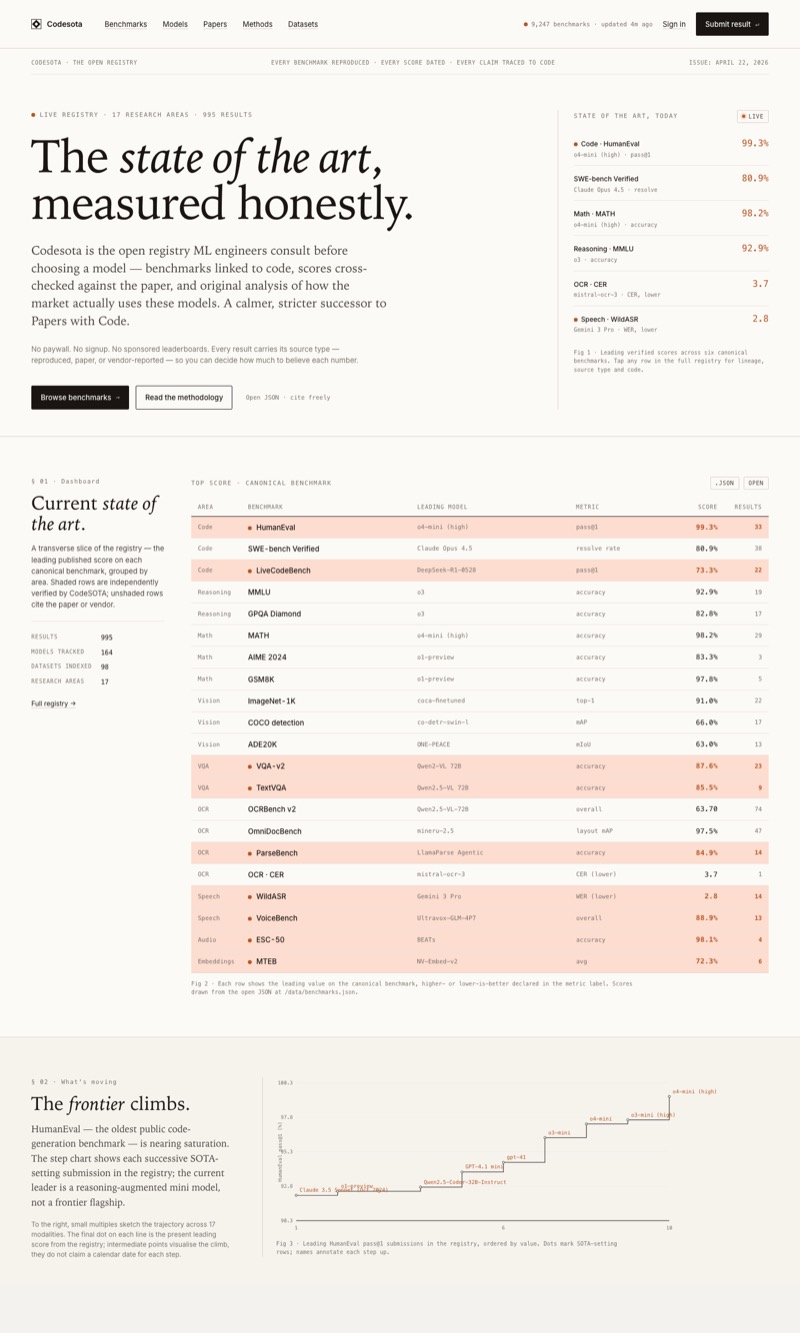

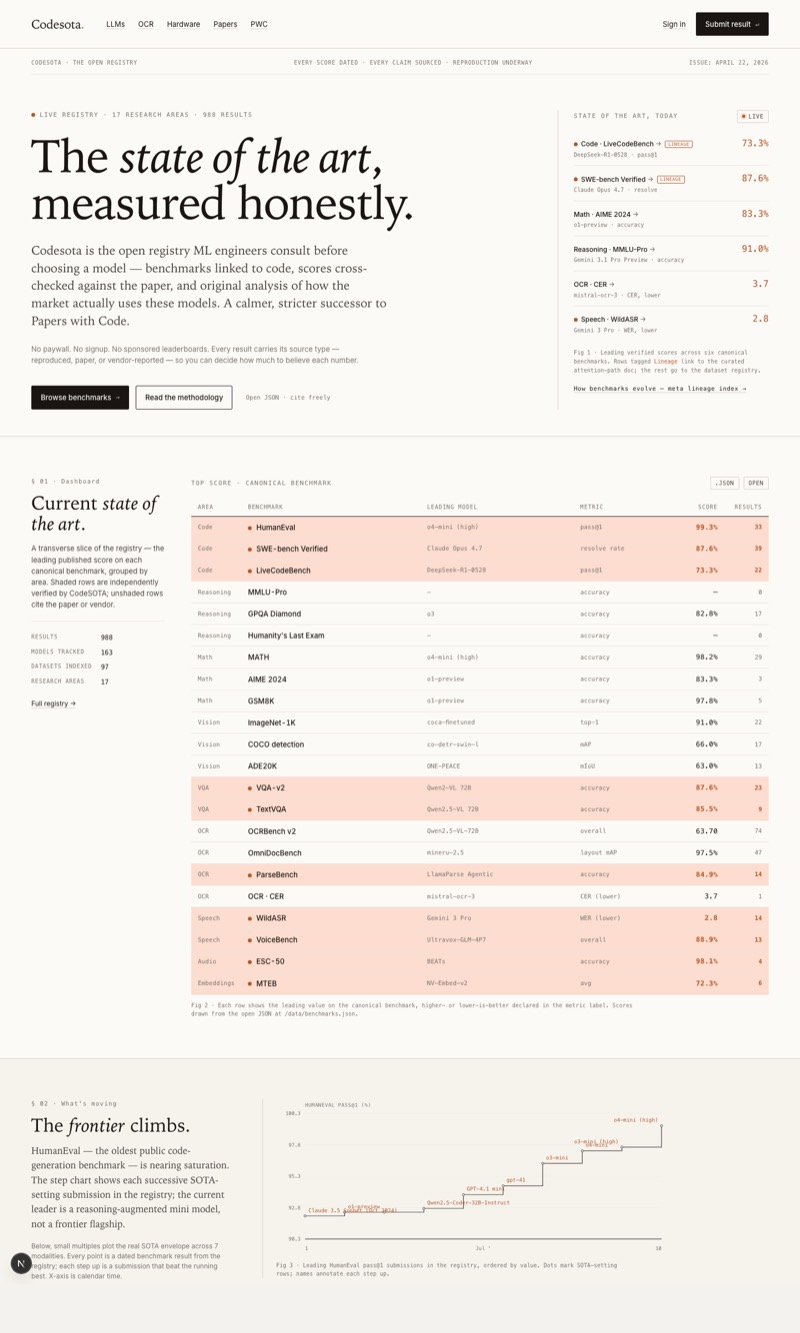

Three threads, one direction: Codesota becomes a callable registry instead of a static reference. /api/sota is live — a free, agent-discoverable, CORS-open JSON endpoint that returns the current dated, sourced SOTA pick per task with full provenance and a stable snapshot_id you can cache against. Short aliases accepted (/api/sota/ocr → document-ocr, /api/sota/code → code-generation, plus asr, tts, vqa, caption, t2i, t2v). Reserved fields (provider_hints, cost_per_1k_usd, benchmark_version) publish as null in v0.1 — better than fabricating them. Documented at /api-landing/sota with full schema, error codes, CORS, rate-limit policy, and the S&P-vs-broker framing that says we explicitly do not route inference. Coding lineage added to public/data/lineages — HumanEval → HumanEval+ → LiveCodeBench → SWE-bench → SWE-bench Verified → SWE-bench Pro, with statuses tracking the Sep 2025 OpenAI announcement that Verified is contaminated. LineageStrip component renders predecessors / current / successors on every benchmark page where the dataset_id appears in any lineage. /methodology § 11 Contamination tax added — the gold-vs-independent two-score framework, OmniDocBench named as first target. robots.txt allowlists /api/sota/ for AI and search crawlers; llms.txt documents the schema so LLMs grounding answers in the site find the contract.
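The predecessors / current / successors split that LineageStrip renders can be sketched as a small pure function. This is a minimal illustration, not the component's code: it assumes a lineage is stored as a simple ordered list and uses display names where the real files in public/data/lineages presumably use dataset_id values. `lineage_view` and `LineageView` are hypothetical names.

```python
from __future__ import annotations

from typing import NamedTuple

# The coding lineage as listed in the entry above, oldest first.
# Assumption: display names stand in for the real dataset_id values.
CODING_LINEAGE = [
    "HumanEval",
    "HumanEval+",
    "LiveCodeBench",
    "SWE-bench",
    "SWE-bench Verified",
    "SWE-bench Pro",
]


class LineageView(NamedTuple):
    predecessors: list[str]
    current: str
    successors: list[str]


def lineage_view(lineage: list[str], dataset_id: str) -> LineageView | None:
    """Split an ordered lineage around dataset_id; None if it is not a member."""
    if dataset_id not in lineage:
        return None
    i = lineage.index(dataset_id)
    return LineageView(lineage[:i], lineage[i], lineage[i + 1:])


view = lineage_view(CODING_LINEAGE, "SWE-bench")
if view is not None:
    print("before:", view.predecessors)
    print("after: ", view.successors)
```

Returning None for a dataset outside the lineage mirrors the rule that the strip only appears on pages whose dataset_id is found in some lineage.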

Navigation hygiene, /benchmark crash fix, /ocr hero rewrite.

Inbound-link audit found dozens of orphans. Footer rebalanced: Domains gained Speech-to-Text and Text-to-Speech; Tools gained OCR Benchmark Priority, Polish OCR, Polish LLM, and the new /api/sota docs link; Codesota gained Benchmark Lineages and Activity Log. /llm index now links its six SEO sub-pages (coding-benchmarks, humaneval-mbpp, gsm8k-math, math-benchmarks, reasoning-benchmarks, open-models) under a new Deep-dives section. /trending added to robots disallow — internal pageview dashboard, not search-discoverable. Dead code removed: src/components/SiteFooter.tsx + Header.tsx (271 LOC, unused — the live footer is in app/landing/components.tsx); /iceberg and /beta page redirects moved to next.config.ts as permanent 308s and the page files deleted. Bug fix: /benchmark/[id] crashed client-side on livecodebench and ocr-cer-benchmark — Postgres NUMERIC columns serialized as strings via the Neon driver under UNION ALL, breaking Recharts math on TrendChart hydration. Fixed with ::float casts in all three union branches and a parseFloat fallback in JS. /ocr hero dropped "the traffic king of our registry" for content-first framing. Every plain-text model mention on /ocr now resolves to /model/{id} via ModelRef + Prose, with vendor hints expanded to cover OCR-specific names (tesseract, easyocr, trocr, nougat, docling, chandra, donut, glm-ocr, dots.ocr) — works site-wide, not just on /ocr. /papers-with-code softened from "every benchmark is reproduced" to "every benchmark is traceable" — acknowledges that closed APIs and non-deterministic outputs need dated source links and prompt templates rather than literal code reproduction.
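The defensive half of the /benchmark/[id] fix (coerce on the client even after the SQL casts) can be illustrated as follows. The real fallback is JavaScript parseFloat; this is a Python rendering of the same idea for illustration only, and the row shape and the `to_float` helper are assumptions rather than the site's code.

```python
from __future__ import annotations

import math
from decimal import Decimal


def to_float(value: object) -> float | None:
    """Coerce a score that may arrive as str, Decimal, int or float.

    Returns None for missing, unparseable or non-finite values so a chart
    can skip the point instead of doing arithmetic on a string.
    """
    if value is None or isinstance(value, bool):
        return None
    try:
        number = float(value)
    except (TypeError, ValueError):
        return None
    return number if math.isfinite(number) else None


# Hypothetical rows: a NUMERIC column may come back as a string or a Decimal.
rows = [
    {"date": "2025-01-01", "score": "71.4"},
    {"date": "2025-02-01", "score": Decimal("73.0")},
    {"date": "2025-03-01", "score": None},
]
points = [(r["date"], to_float(r["score"])) for r in rows]
plottable = [(d, s) for d, s in points if s is not None]
print(plottable)
```

This version is stricter than parseFloat, which accepts a numeric prefix such as "12abc"; rejecting those outright makes bad rows visible rather than silently truncated.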

Fourteen new guides across code, speech, TTS, RAG, agentic, segmentation.

A single expansion of editorial coverage: code generation (Claude Opus 4, GPT-5, Gemini, DeepSeek-V3, Qwen2.5-Coder on HumanEval, SWE-bench, LiveCodeBench); speech recognition (Whisper vs Gemini vs AssemblyAI vs Deepgram); open-source TTS (Kokoro, XTTS v2, Bark, Piper, Fish Speech, Dia, F5-TTS); RAG vs fine-tuning vs long context; agentic benchmarks (SWE-bench, RE-bench, HCAST, WebArena, GAIA, OSWorld); image segmentation (SAM 2, Mask2Former, OneFormer); industrial anomaly detection; time-series forecasting; multimodal state; graph neural networks; medical AI regulation; RL history. Guides index updated with six new categories.

Benchmark relevance audit across all twelve research areas.

Full audit of the computer-vision benchmarks — ImageNet and IIIT5K marked saturated, RVL-CDIP and PubLayNet declining, ADE20K and OmniDocBench preferred. Three new datasets added: LVIS v1.0, DocLayNet, Union14M. COCO detection expanded from 5 → 17 entries (Co-DETR 66.0 mAP leads). ADE20K segmentation 2 → 13 entries (ONE-PEACE 63.0 leads). NLP populated from zero with 53 results across SQuAD v2, GLUE, SuperGLUE, SNLI, CoNLL-2003, CNN/DailyMail. Speech populated from zero with 30 results (LibriSpeech 1.46% WER). Multimodal populated from zero with 37 results. Agentic built from scratch: 36 results across SWE-bench Verified, METR, HCAST, RE-Bench, WebArena, OSWorld. Three empty tasks removed (Polish OCR, LaTeX OCR, Key Information Extraction).

Arena rankings, Academy deep-dives, MTEB editorial.

Seven Arena pages (Text, Code, Vision, Document, Search, Text-to-Image, Text-to-Video) sourced from arena.ai, with category cards, provider dominance table, and a Pareto frontier on Search. Three Academy deep-dives: Matrix Operations, How Transformers Work, Embedding Dimensions. MTEB benchmark page rewritten with HuggingFace mteb.ResultCache data (705 models). Tokens & Context page updated with 2025 pricing. Embedding lesson updated with MMTEB leaderboard data (KaLM-Gemma3-12B at 72.32).

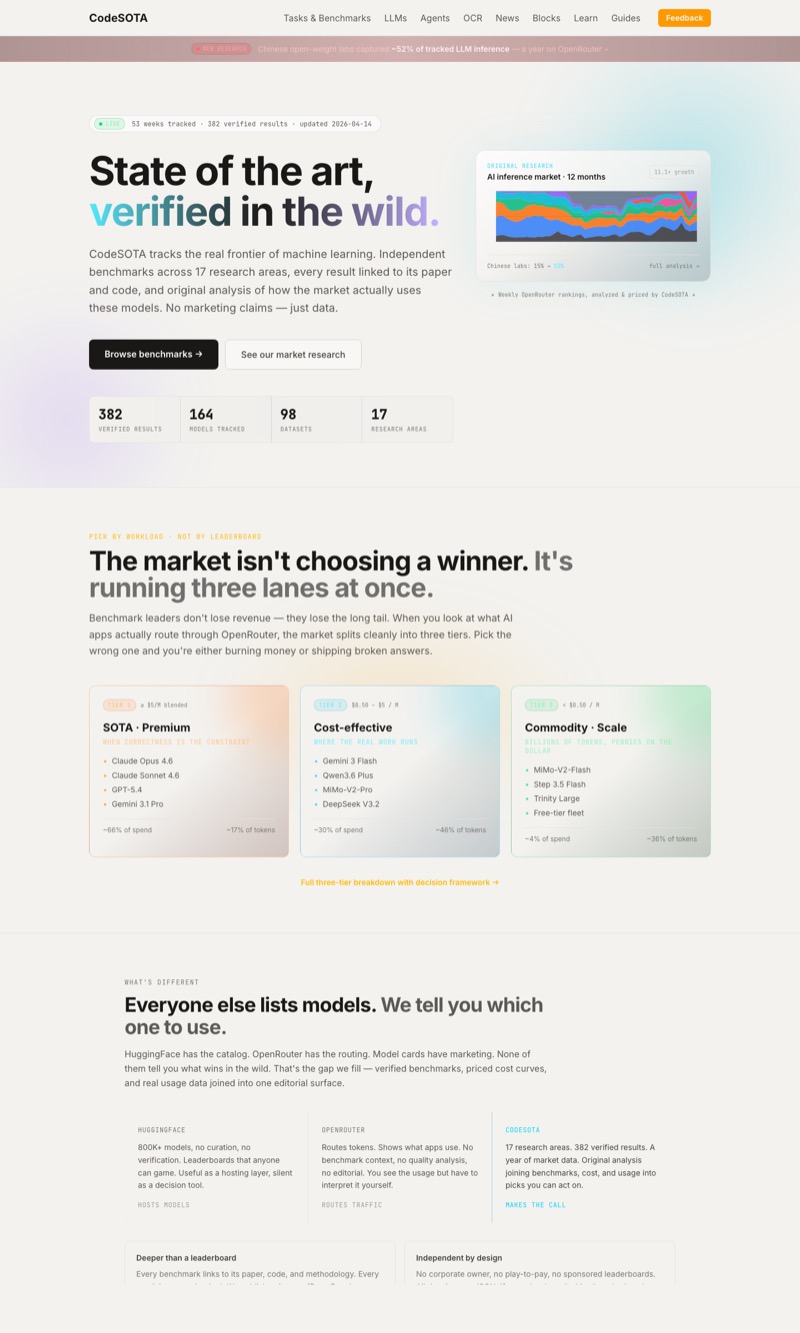

Site redesign — cleaner grid, legible snippets.

Homepage redrawn from 1,051 to 260 lines. /browse, area templates, /news, /flywheel and /papers-with-code all pulled onto the same system: 1px-gap grids, mono labels, no gradients. Gradient text-transparent removed site-wide so headings render in Google featured snippets. Footer restructured into six columns covering forty-plus previously-orphaned pages. Seven new area editorials (adversarial, graphs, industrial, knowledge-base, methodology, RL, time-series). Explainers moved to the footer; dead Twitter link removed.

Research challenges — 42 paid bounties.

New /challenges page with 42 research tasks ranging from Easy ($1) to Legendary ($16), totalling $280 in bounties. Tasks span data collection, cross-paper analysis, benchmark reproduction, and original research, and are designed to fill the registry's "needs research" gaps. Every deliverable is published on Codesota with contributor attribution.

Handwriting OCR rewrite, robotics visuals.

Handwriting OCR page rewritten with SVG bounding-box illustrations and side-by-side model output comparisons. GPT-4o confirmed as new handwriting SOTA at 1.69% CER on IAM (arXiv 2503.15195), beating traditional HTR models. Eleven models benchmarked with 2026 data (DTrOCR, GOT-OCR 2.0, Qwen2.5-VL, DLoRA-TrOCR). Robotics page gained Canvas bar/radar charts, an SVG timeline, and a pipeline diagram. MMLU SOTA progress-bar scaling fixed.

Mass benchmark refresh — 24 leaderboards pulled to 2024-2026 data.

AudioSet: SSLAM new SOTA at 0.502 mAP (ICLR 2025). LibriSpeech: Parakeet RNNT 1.1B leads test-clean at 1.46% WER. COCO Captions: PaLI-X-55B at 149.2 CIDEr. VQA v2.0: PaLI-X 55B at 86.1%. MuJoCo: TD-MPC2 317M at 960 normalized (ICLR 2024). ESC-50: OmniVec2 99.1% (CVPR 2024). SCUT-CTW1500: TextMamba at 89.7. ICDAR 2015, M4, CIFAR-100, LJ Speech, VCTK, OGB and Cora seeded with verified results. /browse made collapsible, area sections sorted by latest update. Priority driven by /trending page-view data.

Hallucination detection rebuilt, submission flow simplified.

Hallucination-detection explainer rewritten against the Vectara HHEM leaderboard (87 models, March 2026). Finding: reasoning models (o3-pro 23.3%, o4-mini 18.6%) hallucinate 2–3× more than standard models on summarization. Best hallucination rate improved from 8.5% (early 2024) to 1.8% (Finix S1 32B, March 2026). Five production Python examples using DeBERTa-v3 NLI, selfcheckgpt, RAGAS v0.2+, factscore, FAISS+NLI. Submit page compressed from a 10-field paper-submission form to a 5-field topic suggestion.

HumanEval editorial, view tracking, Hardparse promotion.

New /benchmark/humaneval editorial: the full history of 60+ models from Codex at 28.8% (2021) to 99% saturation. Site-wide passive page-view counter plus a /trending leaderboard grouped by area, powered by /api/views with daily IP deduplication. Hardparse product page at /ocr/hardparse with benchmark comparison and dual CTA (Mac App + API); CTA banner placed on ten OCR pages. LLM page updated with real OpenRouter rankings. Article reactions system (insightful, practical, surprising, needs-update) added to editorial pages.
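Daily IP deduplication for a view counter can be sketched like this. It is an in-memory illustration under stated assumptions, not the /api/views implementation: hashing the IP with a salt, deduplicating per path, and the `ViewCounter` class are all choices made for the example.

```python
from __future__ import annotations

import hashlib
from datetime import date


class ViewCounter:
    """Count at most one view per (day, path, visitor) triple."""

    def __init__(self, salt: str) -> None:
        self._salt = salt
        self._seen: set[tuple[str, str, str]] = set()
        self.counts: dict[str, int] = {}

    def record(self, path: str, ip: str, day: date) -> bool:
        """Register a view; return True only if it was counted."""
        # Assumption: store a salted hash rather than the raw address.
        visitor = hashlib.sha256(f"{self._salt}:{ip}".encode()).hexdigest()
        key = (day.isoformat(), path, visitor)
        if key in self._seen:
            return False
        self._seen.add(key)
        self.counts[path] = self.counts.get(path, 0) + 1
        return True


counter = ViewCounter(salt="example-salt")
today = date(2026, 1, 1)
counter.record("/ocr", "203.0.113.7", today)
counter.record("/ocr", "203.0.113.7", today)  # same visitor, same day: ignored
print(counter.counts)
```

A production version would need persistent storage and would expire keys once the day rolls over; both are left out here.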

Building Blocks — factory architecture diagrams.

Factory View replaced simple cards with denser operations plots. Mermaid architecture diagram added to /building-blocks with shared bus, ingress, processing stages and delivery nodes. Control lanes (observability, cost guardrails, cache reuse) made visible. Per-pipeline complexity dashboard: assembler counts, implementation counts, modality breadth, complexity index.

LLM and hardware pages refreshed for 2026.

LLM benchmarks page updated with 2026 SOTA across 16 benchmarks (Claude 4.6, GPT-5, Llama 4, Gemini 2.5); SWE-bench figure moved from 49% to 80.9%. Hardware GPU guide rewritten with RTX 5090/5080 specs, H200/B200 data-centre GPUs, buy-vs-rent analysis and an Apple Silicon section; expanded from 516 to 1,114 lines. Best OCR for Python updated with Surya and DocTR alongside PaddleOCR, Tesseract, EasyOCR, RapidOCR, with live benchmark numbers. HumanEval leaderboard updated with current SOTA (96.3%) and saturation noted.

Seven new benchmark editorials.

ImageNet (SOTA timeline 63.3% → 92.7%, transfer-learning matrix); COCO (object-detection leaderboard, AP breakdown, detection pipeline); SQuAD (F1/EM scores, reading-comprehension analysis); GLUE/SuperGLUE (saturation analysis, task breakdown); GSM8K (math reasoning, chain-of-thought analysis); HumanEval (pass@1 leaderboard, language coverage); SWE-bench Code Generation (code models vs agent scaffolds). Twenty-plus custom visualizations generated across the pages.

SWE-bench, OmniDocBench and ADE20K editorials.

SWE-bench editorial: SOTA timeline from Claude 2 at 1.96% to Claude Opus 4.5 at 80.9%, 15-model leaderboard, 8 key papers. OmniDocBench: Shanghai AI Lab CVPR 2025 document-parsing benchmark with full leaderboard and metric breakdowns. ADE20K rebuilt with real dataset images, six matplotlib visualizations, and a 23-model leaderboard. New /find page: a four-step benchmark finder wizard.

Eleven interactive paradox explainers.

A run of 3Blue1Brown-style interactive explainers on mathematical paradoxes: Stein's Paradox, Will Rogers Phenomenon, Berkson's Paradox, Low Birth Weight Paradox, Schelling's Segregation (agent-based model), Ross-Littlewood, Banach-Tarski, Newcomb's, Arrow's Impossibility Theorem, the Cobra Effect, and Grossman-Stiglitz.

Rys OCR — Polish SOTA research preview.

First fine-tune of a Polish OCR model released on HuggingFace. LoRA fine-tune on PaddleOCR-VL base model; 71.3% CER reduction and 46.1% WER reduction on Polish text; optimised for Polish diacritics; runs on 4–6 GB VRAM. Apache 2.0 license. Trained on 10,000 synthetic Polish document images.

Twenty-one new interactive explainers.

Face anonymization, PII detection, text reranking (bi-encoder vs cross-encoder), hallucination detection, hybrid retrieval (BM25 + dense fusion), controllable generation, chart understanding, question answering, long-context summarization, video-to-text, code generation, and several audio/video capabilities. Fifty-plus building blocks now have interactive explainers.

Next.js 16 migration and OCR labeling platform.

Stack migrated from Astro to Next.js 16.1.1 with the App Router. New OCR Labeling Platform: upload images, get bounding boxes from DOTS OCR (Replicate), correct and submit. Twenty-seven interactive explainer components migrated to React. CodeBlock component with Prism.js and .ipynb download. Dynamic routes fixed for Next.js 15+ async params. New /benchmark/[id] and /[area]/compare/[...slug] pages.

Ten SOTA editorials across major AI areas.

Practitioner editorials for Speech (Whisper, Conformer, XTTS), NLP (GPT-5, Claude 3.5, DeepSeek-V3), Computer Code (SWE-bench leaders, RLVR training), Reasoning (o3/o4-mini, test-time compute), Multimodal (InternVL3, Molmo 2), Agentic AI (METR, MCP/A2A), Audio (Suno v4.5, MSEB, mHuBERT), Robotics (OpenVLA 7B, COLOSSEUM brittleness), and Medical (GPT-4o USMLE 90.4%, BoltzGen). Citations drawn from NeurIPS, ICML, CVPR, ACL.

The Zen of AI Composition — free PDF released.

The book is now available as a free PDF download, no email required. Three parts: Nature of Composition, Transformations, Practice. Download counter tracks interest.

The Zen of AI Composition — early access.

Landing page and double opt-in signup (via Resend) for the book. Admin notifications on confirmed signups.

Model comparator, verification protocol, intent analytics.

Interactive model comparator: pick 2–4 OCR models, compare across eight metrics including failure modes (diacritics, tables, stamps, handwriting, low quality); shareable URLs. Verification Protocol page: five-step benchmark verification process, verified-badge schema (dataset hash, prompt/config, runtime, cost, metric code), three verification tiers. Decision-intent analytics (scroll depth, time on page, CTA clicks, outbound tracking). Atropos LLM RL guide added. Standalone OCR evaluation script for testing vision models on OCR-VQA.

OCR decision platform.

New canonical /ocr/decision page organised around a failure taxonomy (diacritics, column bleed, numeric substitution, table collapse, stamp interference) rather than accuracy percentages. Homepage reframed around OCR with a 90-second clarity test (Who / What / Why / Next). Independence and conflict-of-interest policy added to the methodology page. EvaluationCTA component added to five comparison pages.

Agentic AI benchmarks — METR time horizon.

New Agentic AI page tracking METR benchmarks on autonomous AI capability. Time Horizon leaderboard: GPT-5.1-Codex-Max (160 min), GPT-5, o1-preview, Claude 3. HCAST, RE-Bench, SWAA task-suite breakdowns. Interactive benchmark saturation chart with category views. 27 benchmarks across 8 categories, including the new Agentic category.

Six more interactive explainers.

Image captioning (LLaVA, Qwen2-VL, BLIP-2, GPT-4V). Text-to-video (Sora, Runway Gen-3, CogVideoX, Diffusion Transformer). Image-to-image (inpainting, outpainting, super-resolution, ControlNet, IP-Adapter). Text-to-3D (DreamFusion, Shap-E, MVDream, LGM, Score Distillation Sampling). Image-to-video (Stable Video Diffusion, AnimateDiff, LivePortrait, Runway API). Depth estimation enhanced with real example images.

Eight building-block explainers.

Object detection (YOLO v1–v11, NMS, two-stage vs single-stage, mAP). Image segmentation (SAM 2, semantic/instance/panoptic types, Mask2Former). Depth estimation (Depth Anything v2, ZoeDepth, Marigold). Image-to-3D (Gaussian Splatting, NeRF, Trellis). Speech recognition (Whisper, turbo vs large-v3, faster-whisper, diarization).

Modular benchmark runner; Mistral OCR 2512 verified.

New modular benchmark-runner system with pluggable backends (Mistral OCR, OCRBench v2, OmniDocBench). Mistral OCR 2512 verified: 9 pages in 7.37 seconds. HTTP API daemon for remote GPU benchmark execution. Checkpoint-based resumable runs. Automated results sync to the site data files.
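A checkpoint-based resumable run can be sketched in a few lines. This is a minimal illustration assuming a JSON checkpoint file keyed by item id; the runner's actual checkpoint format and backend interface are not described in this log, so `run_with_checkpoint` and the fake backend are hypothetical.

```python
from __future__ import annotations

import json
import tempfile
from pathlib import Path
from typing import Callable, Iterable


def run_with_checkpoint(
    items: Iterable[str],
    process: Callable[[str], float],
    checkpoint: Path,
) -> dict[str, float]:
    """Process items, skipping any already recorded in the checkpoint file."""
    results: dict[str, float] = {}
    if checkpoint.exists():
        results = json.loads(checkpoint.read_text())
    for item in items:
        if item in results:
            continue  # finished in an earlier run
        results[item] = process(item)
        # Write to a temp file, then replace, so an interrupted write
        # cannot leave a half-written checkpoint behind.
        tmp = checkpoint.with_suffix(".tmp")
        tmp.write_text(json.dumps(results))
        tmp.replace(checkpoint)
    return results


with tempfile.TemporaryDirectory() as workdir:
    ckpt = Path(workdir) / "run.json"
    processed: list[str] = []

    def fake_backend(page: str) -> float:
        processed.append(page)
        return float(len(page))

    run_with_checkpoint(["p1", "p2"], fake_backend, ckpt)        # first run
    run_with_checkpoint(["p1", "p2", "p3"], fake_backend, ckpt)  # resumed run
    print(processed)  # each page is processed once
```

Saving after every item trades some I/O for the guarantee that at most one item is repeated after a crash, which matters when each item is a paid API call or a GPU job.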

LLM and TTS interactive explainers.

LLM explainer: tokenization, embeddings, attention, next-token prediction, transformer architecture — with interactive canvas visualizations (BPE demo, clickable attention matrix, probability distributions). TTS explainer: text normalization, G2P, prosody, acoustic models, mel spectrograms, vocoders, zero-shot voice cloning (speaker embedding, in-context learning, fine-tuning). Neural codec language models (VALL-E, ElevenLabs-style) covered.

Building Blocks and editorial guides.

Building Blocks: a taxonomy of modular AI capabilities (image-to-vector, text-to-vector, etc.). Editorial guides for three personas — Executives (Document Processing Technology Matrix), Enthusiasts (SOTA Tracker), Researchers (ML Landscape 2025). Data Flywheel page explaining community-driven benchmark growth. LLM and Object Detection hubs added. Papers-with-Code archive integrated: 1,519 papers, 464 models, 145 datasets.

Papers with Code page SEO; production auth.

Papers-with-Code alternative page tuned for search: optimised title, meta description, FAQ section, internal links. Clerk switched to production mode with GitHub OAuth. User work-profile preferences added to the dashboard. Sitemap corrected to the www domain. Custom analytics removed in favour of Vercel Analytics.

User accounts via Clerk.

Authentication via Clerk with GitHub OAuth; protected dashboard for authenticated users; sign-in and sign-up pages styled for the dark theme.

Polish OCR benchmark (internal).

A Codesota-built Polish OCR benchmark: 1,000 synthetic and real Polish text images with ground truth across four categories (synth_random, synth_words, real_corpus, wikipedia) and five degradation levels. Tesseract 5.5.1 baseline: 26.3% CER overall. Contamination-resistant design: synthetic categories show about 10× worse CER than real text (52% vs 5%), exposing reliance on statistical language models.
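For readers unfamiliar with the metric, character error rate is the character-level edit distance between the OCR output and the ground truth, divided by the ground-truth length. The sketch below is the textbook definition, not the benchmark's own scoring script; the NFC normalization step is an assumption added so that Polish diacritics count as single characters.

```python
import unicodedata


def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance: minimum insertions, deletions and substitutions."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate relative to the reference length."""
    # Assumption: compare NFC-normalized text so "ó" is one code point.
    ref = unicodedata.normalize("NFC", reference)
    hyp = unicodedata.normalize("NFC", hypothesis)
    if not ref:
        raise ValueError("reference must be non-empty")
    return edit_distance(ref, hyp) / len(ref)


# Three of the four characters lose their diacritics.
print(cer("łódź", "lodz"))  # 0.75
```

Because the denominator is the reference length, CER can exceed 100% when the output contains many spurious insertions.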

Mistral OCR 3 added.

Mistral OCR 3 (mistral-ocr-2512) added to the benchmarks with a dedicated review page: pricing, code examples, comparisons against GPT-4o and PaddleOCR. Vendor-claimed 94.9% accuracy and a 74% win rate over OCR 2 are recorded as vendor claims; $2 per 1,000 pages ($1 with batch API).

Featured guides on the homepage.

Homepage gains an "In-Depth Comparisons" section with image cards pointing to six editorial guides: PaddleOCR vs Tesseract, GPT-4o vs PaddleOCR, Best OCR for Invoices, Best OCR for Handwriting, Audio AI Benchmarks, and Chest X-ray AI Models.

Audio AI — classification, music generation.

Three editorial pages on Audio: classification (BEATs at 0.498 mAP on AudioSet, 98.1% on ESC-50), music generation (Suno, Udio, MusicGen, Stable Audio), and audio understanding (Qwen2-Audio, SALMONN). Seven custom visualizations; metrics explainer (mAP, FAD, MOS, CLAP).

GPU hardware benchmarks.

RTX 3090 vs 4090 vs 5090 for ML workloads: LLM inference (Llama 3, Mistral) in tokens/sec, image generation (SDXL, Flux, SD 1.5), training (LoRA, YOLO, ResNet), VRAM-fit guide, cloud pricing across RunPod, vast.ai and Lambda Labs.

Polish OCR benchmarks page.

Four Polish OCR datasets (PolEval 2021, IMPACT-PSNC, reVISION, Polish EMNIST). Models covered: Tesseract Polish, ABBYY FineReader, HerBERT, Polish RoBERTa. Best CER 2.1% on PolEval 2021; 97.5% word accuracy on IMPACT. Covers diacritics and gothic-font recognition.

Industrial anomaly detection.

Eight industrial datasets (MVTec AD, VisA, weld defects, steel defects) and twelve models (PatchCore, EfficientAD, SimpleNet, FastFlow). Best AUROC 99.6% (SimpleNet on MVTec AD). Three approach families covered: Memory Bank, Normalizing Flows, Student-Teacher.

Chest X-ray AI — radiology benchmarks.

Seven chest X-ray datasets (CheXpert, MIMIC-CXR, NIH ChestX-ray14, VinDr-CXR, PadChest, RSNA, COVID-19). Fifteen radiology AI models (CheXNet, CheXzero, TorchXRayVision, MedCLIP, GLoRIA, BioViL). 20+ AUC results across datasets. Cross-dataset comparison chart and a DICOM → multi-label-classification pipeline explainer.

SEO and accessibility fixes.

schema.org/Dataset on benchmark pages for Google Dataset Search. Dynamic meta descriptions that include current SOTA model and score. FAQPage schema on Speech and Code Generation pages. Canvas accessibility: aria-labels and fallback text on the DocumentScanner. BreadcrumbList schema added for navigation.

Six new verticals.

NLP (GLUE, SuperGLUE, SQuAD with 20+ models); Speech (Whisper vs Azure, LibriSpeech); Multimodal (VQA, image captioning, GPT-4V vs Gemini); Reasoning (MATH, GSM8K, GPQA, o1 vs GPT-4); LLM comparison hub (GPT-4 vs Claude); Code generation (best-for Python, JavaScript, debugging guides). Existing OCR coverage was expanded alongside them (receipts, tables, multilingual).

OCR Arena — speed vs quality scatter plot.

Interactive scatter plot of ELO score vs latency for 18 models from OCR Arena human preference rankings. Open-source models coloured green, closed/API models coloured red. Pareto-optimal models highlighted.
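Pareto-optimal here means that no other model is both at least as fast and at least as highly rated. A minimal sketch of that selection follows; the entries are made-up placeholders rather than OCR Arena data, and `Entry` and `pareto_frontier` are hypothetical names.

```python
from typing import NamedTuple


class Entry(NamedTuple):
    name: str
    elo: float        # higher is better
    latency_s: float  # lower is better


def pareto_frontier(entries: list[Entry]) -> list[Entry]:
    """Return entries not dominated on (higher ELO, lower latency)."""
    frontier: list[Entry] = []
    best_elo = float("-inf")
    # Walk from fastest to slowest; at equal latency the higher ELO comes first.
    for entry in sorted(entries, key=lambda e: (e.latency_s, -e.elo)):
        # A slower model earns its place only by beating every faster one.
        if entry.elo > best_elo:
            frontier.append(entry)
            best_elo = entry.elo
    return frontier


# Placeholder values for illustration only.
models = [
    Entry("model-a", 1500, 2.0),
    Entry("model-b", 1400, 1.0),
    Entry("model-c", 1450, 3.0),
    Entry("model-d", 1300, 0.5),
    Entry("model-e", 1400, 1.5),
]
print([e.name for e in pareto_frontier(models)])  # ['model-d', 'model-b', 'model-a']
```

Sorting once and tracking the best ELO seen keeps the pass at O(n log n). Exact duplicates on both axes are collapsed to a single entry.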

Codesota meta-benchmark score.

Aggregate OCR score across seven benchmarks with weighted scoring: primary (OmniDocBench, OCRBench v2, olmOCR-Bench) at 3×, secondary (CC-OCR) at 2×, language-specific at 1×. Interactive models-vs-benchmarks heatmap shows coverage gaps and a testing-priority list for contributors.
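The 3× / 2× / 1× weighting can be read as a weighted mean over whichever benchmarks a model has results for. The sketch below takes that reading. Two things are assumptions rather than documented behaviour: that all scores sit on a common 0–100 scale, and that missing benchmarks are handled by normalizing over the covered weights. The "lang-example" benchmark name is a placeholder.

```python
from __future__ import annotations

TIER_WEIGHTS = {"primary": 3.0, "secondary": 2.0, "language": 1.0}

BENCHMARK_TIERS = {
    "OmniDocBench": "primary",
    "OCRBench v2": "primary",
    "olmOCR-Bench": "primary",
    "CC-OCR": "secondary",
    "lang-example": "language",  # placeholder for a language-specific benchmark
}


def meta_score(scores: dict[str, float]) -> float | None:
    """Weighted mean over the benchmarks a model was actually tested on."""
    total = 0.0
    weight_sum = 0.0
    for benchmark, score in scores.items():
        weight = TIER_WEIGHTS[BENCHMARK_TIERS[benchmark]]
        total += weight * score
        weight_sum += weight
    # Assumption: a model with no results gets no score rather than zero.
    return total / weight_sum if weight_sum else None


# (3 * 80 + 2 * 60) / (3 + 2) = 72.0
print(meta_score({"OmniDocBench": 80.0, "CC-OCR": 60.0}))
```

Normalizing over covered weights can flatter a model tested on only its best benchmark, which is one reason a coverage heatmap is useful next to the aggregate.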

Papers with Code database integration.

1,500+ benchmark results imported from the Papers-with-Code archive. SOTA timelines rendered as hill-climbing charts. 146 datasets, 464 models, 15 research areas and 70+ tasks indexed. NLP (9 tasks), Reasoning (5), Code (6), Speech (5) among the populated areas.

Papers with Code — the story.

Full editorial on Papers with Code, 2018–2025: what it was, why it mattered, what was lost when Meta retired it in July 2025, and why Codesota is attempting to fill the gap. Cost-vs-quality frontier chart added to the vendors page.

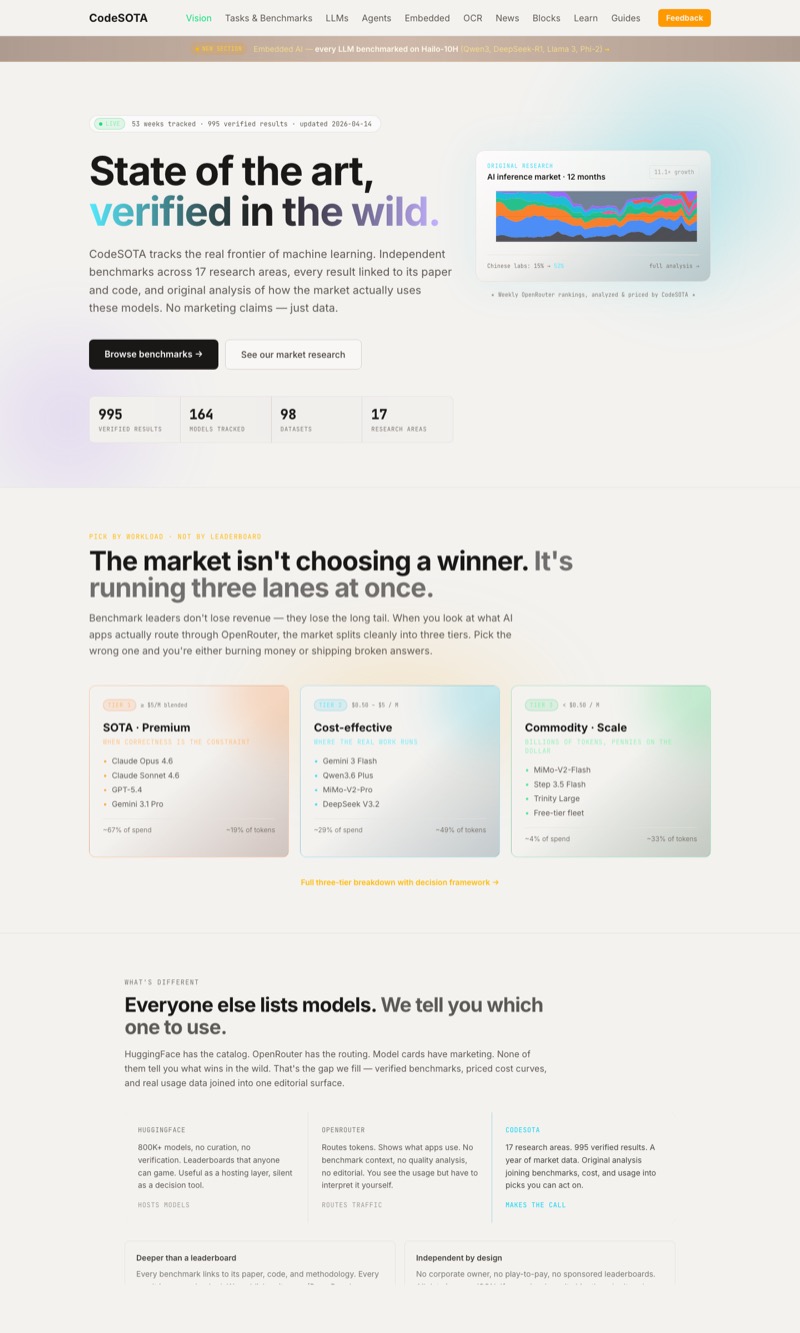

Homepage redesign; OCR vendors page.

Homepage reframed as "State of the Art, Verified", positioning Codesota as a Papers-with-Code successor. New OCR vendors page with nine vendors (Mistral, Docling, GPT-4o, PaddleOCR, Tesseract, Google Doc AI, Azure, DocTR, Chandra) and a decision matrix by use case.

Mistral OCR documentation.

Mistral OCR API guide with Python examples. Vendor claims recorded as claims: 94.9% accuracy, 2,000 pages/min, $0.001/page. Independent testing caveats noted. Mistral vs Docling comparison table.

Docling tutorial — verified.

Every code block in the Docling tutorial has been executed on real documents. Real outputs: 33,201 characters of markdown from a 10-page PDF in 34.95 s; three tables extracted with CSV export. Artifacts available for download. Performance measured on Apple Silicon with MPS acceleration.

Docling documentation added.

Full Docling documentation following the Diataxis framework: tutorial (PDF to Markdown), how-to guides (OCR engines, table extraction, RAG integration), technical reference (API, model specs), and an architecture-level explanation.

Chandra OCR benchmark data.

Chandra OCR 0.1.0 added. Current top performer on olmOCR-Bench at 83.1%. Comparison data against PaddleOCR-VL, MinerU, Marker.

Document scanner tutorial.

A full document-scanning pipeline with OpenCV: edge detection, perspective correction, enhancement. Interactive demo with sample images and an integration guide for downstream OCR engines.

Codesota launches.

OCR benchmark leaderboard with eight major benchmarks. State-of-the-art results from 50+ models. Methodology documentation and comparison pages (PaddleOCR vs Tesseract, GPT-4o vs PaddleOCR). The stated goal from day one: verify vendor claims independently and help readers choose the right tools.