The Benchmark that Changed Everything

Before ImageNet, computer vision datasets were small and specialized. In 2009, researchers from Stanford and Princeton introduced a dataset of unprecedented scale, organized according to the WordNet hierarchy.

The annual ILSVRC competition (2010–2017) provided a standardized evaluation framework that allowed researchers to compare architectures fairly. The 2012 victory of AlexNet marked the definitive shift from hand-crafted features (like SIFT) to end-to-end learned representations via Convolutional Neural Networks (CNNs).

Key Innovations

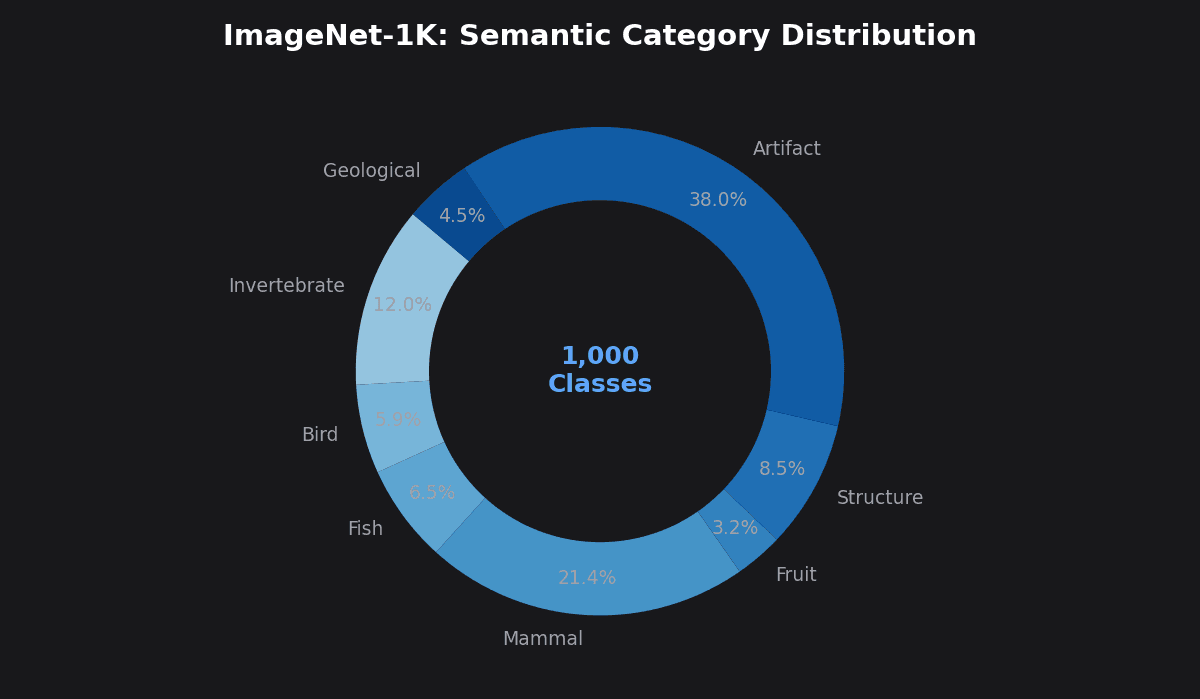

- ● Standardized 1,000-class subset for reproducible research.

- ● Hierarchical structure enabling fine-grained classification.

- ● Established Top-1 and Top-5 error as industry-standard metrics.

VISUALIZATION 01

Synset Hierarchy: From "Mammal" to "Golden Retriever"

Accuracy Evolution

The rapid rise of Top-1 accuracy (Top-5 for pre-2022 era) over the ILSVRC era and beyond.

Current SOTA Leaderboard

| Rank | Model Architecture | Top-1 Acc | Date | Resources |

|---|---|---|---|---|

| 1 | CoCa (ViT-G/14) Google · Finetuned; 2.1B params | 91.000% | 2022-05 | |

| 2 | SoViT-400M/14 Google · Compute-optimal ViT shape | 90.300% | 2023-05 | |

| 3 | EVA-02 (ViT-L/14+) BAAI · Finetuned; 304M params, public data only | 90.000% | 2023-03 | |

| 4 | ViT-22B/14 Google · 22B params; finetuned on ImageNet-1K | 89.510% | 2023-02 | |

| 5 | InternViT-6B (InternVL) OpenGVLab · CVPR 2024 Oral; 6B params | 88.200% | 2024-06 | |

| 6 | maxvit_base_tf_512.in1k Google | 86.598% | 2023-04 | |

| 7 | coatnet_2_rw_224.sw_in12k_ft_in1k Google | 86.580% | 2022-09 | |

| 8 | nextvit_large.bd_ssld_6m_in1k_384 ByteDance | 86.542% | 2022-11 | |

| 9 | swin_large.ms_in22k_ft_in1k Microsoft | 86.330% | 2021-03 | |

| 10 | convnext_base.fb_in22k_ft_in1k Meta AI | 86.298% | 2022-01 |

Note: All scores are finetuned Top-1 accuracy on the ImageNet-1K validation set. Most top models use ImageNet-21K or large-scale image-text data for pre-training. Last updated March 2026.

Dataset Variants

While ILSVRC 2012 is the "standard" ImageNet, the ecosystem has expanded to address specific challenges like scale, robustness, and distribution shift.

ImageNet-1K (ILSVRC)

Standard Benchmark

ImageNet-21K

Large-scale Pre-training

ImageNet-v2

Robustness Testing

ImageNet-C / R

Corruption & Rendition

The Evaluation Pipeline

Preprocessing

Resizing to 224x224 or 384x384, center cropping, and normalization.

Inference

Forward pass through the model to generate class logits.

Softmax

Converting logits to a probability distribution over 1,000 classes.

Scoring

Checking if the ground truth label is the top prediction (Top-1).

Implementation & Tools

pytorch/vision

Official PyTorch vision library with pre-trained ImageNet weights.

rwightman/pytorch-image-models

The "timm" library: most comprehensive collection of SOTA ImageNet models.

google-research/vision_transformer

Original JAX implementation of ViT and MLP-Mixer.

facebookresearch/ConvNeXt

Code for "A ConvNet for the 2020s" achieving SOTA.

Foundational Papers

ImageNet: A Large-Scale Hierarchical Image Database

Deng et al. • CVPR 2009

ImageNet Classification with Deep Convolutional Neural Networks

Krizhevsky et al. • NeurIPS 2012

Deep Residual Learning for Image Recognition

He et al. • CVPR 2016

CoCa: Contrastive Captioners are Image-Text Foundation Models

Yu et al. • TMLR 2022

EVA-02: A Visual Representation for Neon Genesis

Fang et al. • BAAI 2023

InternVL: Scaling up Vision Foundation Models

Chen et al. • CVPR 2024 Oral

Top-1 Accuracy

The standard metric for ImageNet. It measures the percentage of test images where the model's highest-probability prediction exactly matches the ground truth label. As of 2024, SOTA models exceed 90% Top-1 accuracy on the 1K validation set.

Top-5 Error

Historically used when classification was more difficult. A "success" is counted if the correct label is among the model's top 5 predictions. This was the primary metric for the original ILSVRC competitions.

Related Benchmarks

| Benchmark | Focus | Scale | Key Difference |

|---|---|---|---|

| CIFAR-10/100 | Small-scale classification | 60k images (32x32) | Low resolution, toy dataset |

| COCO | Detection & Segmentation | 330k images | Focus on object localization |

| PASCAL VOC | Object Recognition | 11k images | Pre-dated ImageNet scale |

Access the ImageNet Dataset

Ready to train your own models? Access the official ImageNet database for research and non-commercial use. Requires registration and institutional affiliation.